I use a technique called Just-in-Time Teaching (JiTT), where students respond to conceptual questions (a.k.a. WarmUps) the night before class and I use their responses to shape how the in-person class time is used. Part of the magic is that by asking students to write answers in sentences (not multiple-choice) I get to see what they actually think about a topic before deciding how we’re going to spend our time. (You can learn more at this Ready Spotlight on JiTT.)

An interesting insight you get when using JiTT is that some patterns of student responses are stable from semester to semester, while others are idiosyncratic to a particular class. For example, there are WarmUp questions where, roughly speaking, I can reliably expect 40% of students to answer correctly, 20% to answer incorrectly for one reason, 20% to answer incorrectly for another reason, and 20% to show deeper confusion or other issues. For other questions, each class produces a unique distribution. Then there are times when external factors are clear, such as the big change in response patterns when I changed textbooks (a separate, fascinating topic).

This semester I’m teaching the Physics of Nature, a General Studies conceptual physics course. In the last third of the term, we begin to discuss Einstein’s relativity and quantum physics, which are really fun and very counterintuitive. This April I started seeing a lot more correct answers on the WarmUp questions than in the past. These are low-stakes warm-ups and students get full credit if they show thoughtful effort, right or wrong. I’d like to think I’ve done a good job convincing my students to just answer using their own thinking and not outsource the (brief) work to generative AI. It isn’t perfect, but earlier in the term I would have estimated that fewer than 10% of the responses this semester were AI-generated. A few times I have asked a student directly about their oddly accurate writing, and sometimes that led to a clear confession and apology.

Still, toward the end of a recent class, I realized I had something to say and warned the students I was about to go on a tangent. I said something like this:

“This is a weird time to be in higher ed — whether you’re a student or a professor.

Here’s what’s been puzzling me lately. When I look at your recent WarmUp responses, I see that after only reading the textbook, you seem to be understanding these topics significantly better than previous classes that answered the same questions.

But when I pause to reflect, I get nervous. I can’t tell if what I’m seeing is a real signal or an illusion. I know that some of you are using generative AI to answer these questions — even though you don’t need to get anything right to get full credit. I’d like to think I’ve done a good job convincing you that you don’t need AI for WarmUps, but the temptation is still there, and I completely understand why. Answering on your own has a real cost. To write even a semi-coherent response, you have to at least skim 1-5 pages of the book and then find the time and focus to respond, setting aside everything else in your life and the world. Despite my best efforts to make WarmUps a small and safe learning task, learning takes effort — and usually requires at least a little discomfort.

So, when I see that a particular misconception dropped from 40% in previous classes to 0% for you, what do I do? I could accept that as a real signal and shift my plans. We would spend less time on that misconception and move on sooner. Or I could disregard it because I don’t believe it. But that would violate a core reason I love WarmUps: I want to respond to what you, the actual human learners, need.”

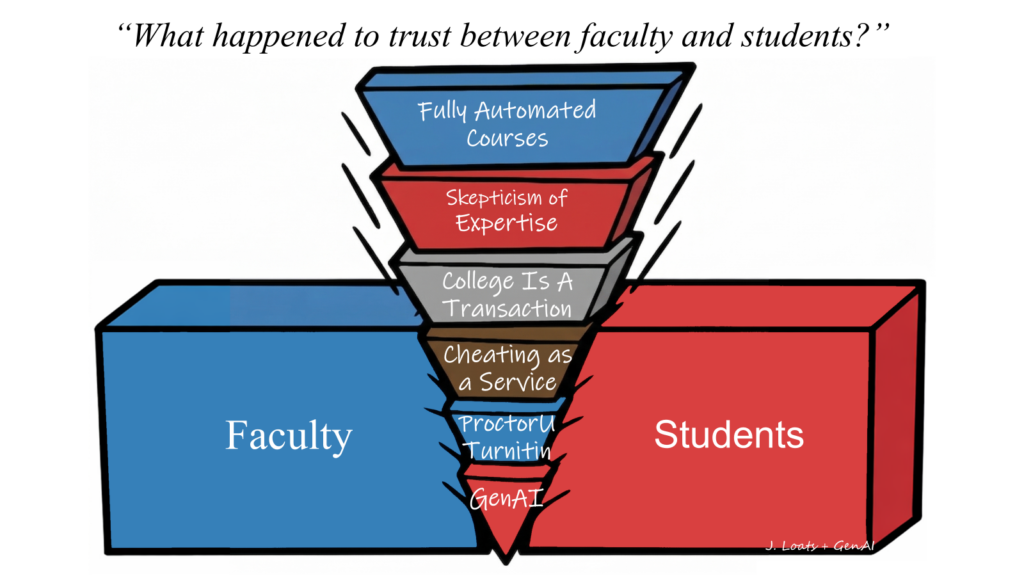

As I reflect on my own teaching and talk with faculty across this university and beyond, the issue that keeps surfacing is trust — and it runs in both directions.

Do students trust their professors to care what they actually think? Or will the professor treat them as a generic learner, with stereotypical beliefs, needs and desires? Will students trust that the work they’re assigned is meaningful, not just academic busywork? Do they trust that their grade will reflect their actual abilities, or perhaps the degree to which they grow in knowledge or skill? What happens when that trust is broken?

Do professors trust their students to respond using their own thinking, rather than repeating “what the professor wants to hear” or, worse, having a chatbot write it? Do professors trust that students care about their own learning, rather than just checking boxes toward a diploma? What happens when that trust is broken?

For me, this is an unusually philosophical and reflective bit of writing. But I’m going to lean into it.

These problems aren’t new, but they are “suddenly” huge, and they feel existential. Maybe that means it is time for radical change. What if every class “sacrificed” two weeks of content to focus on these trust issues? What if many, most, or all classes shifted to an alternative grading structure that really put students in charge of deciding what they want out of each class they take? What if classes started out by having students vote on which Learning Objectives they think they will need in six months or in five years?

Change is coming. Do we leap to the front and try to steer, or get dragged along by the current?


Generative AI disclosure:

The editorial comic was conceived by me. The background drawing was generated by ChatGPT and then modified by me in Paint.NET. I made the final product in PowerPoint, laying text over the graphic and seizing the rare chance to use Comic Sans.

I did my first draft of this piece by speaking into Claude as I walked across campus. I asked Claude to transcribe what I said, with light editing to remove filler words. I then asked for help planning the structure of the overall piece, especially the ending, which I often struggle with. I did more editing and writing all on my own.

After finishing the writing I used Claude to write a first draft of a short “teaser blurb.” But I didn’t like it much and ended up just writing my own this time.

Want to know more? Send me an email and we can chat!