The CTLD has launched a new AI Literacy training designed to help students understand what generative AI (gAI) tools, specifically Large Language Models (LLMs) like ChatGPT, can and cannot do, and how to use them responsibly in academic and professional contexts. The training introduces key concepts such as bias, hallucination, overconfidence, and responsible disclosure, and culminates in a personal AI use plan that students can apply across courses. (Details and instructions on how to adopt the module into a course are on the Ready page.)

Attitudes and policies about whether and when generative AI can be used vary across topics, courses and instructors at MSU Denver. Our students face a confusing landscape in which seemingly identical uses of gAI are allowed (or even required) in one class but strictly forbidden in another. Giving students a starting point for understanding and discussing gAI is key to helping them navigate this variety of policies and processes.

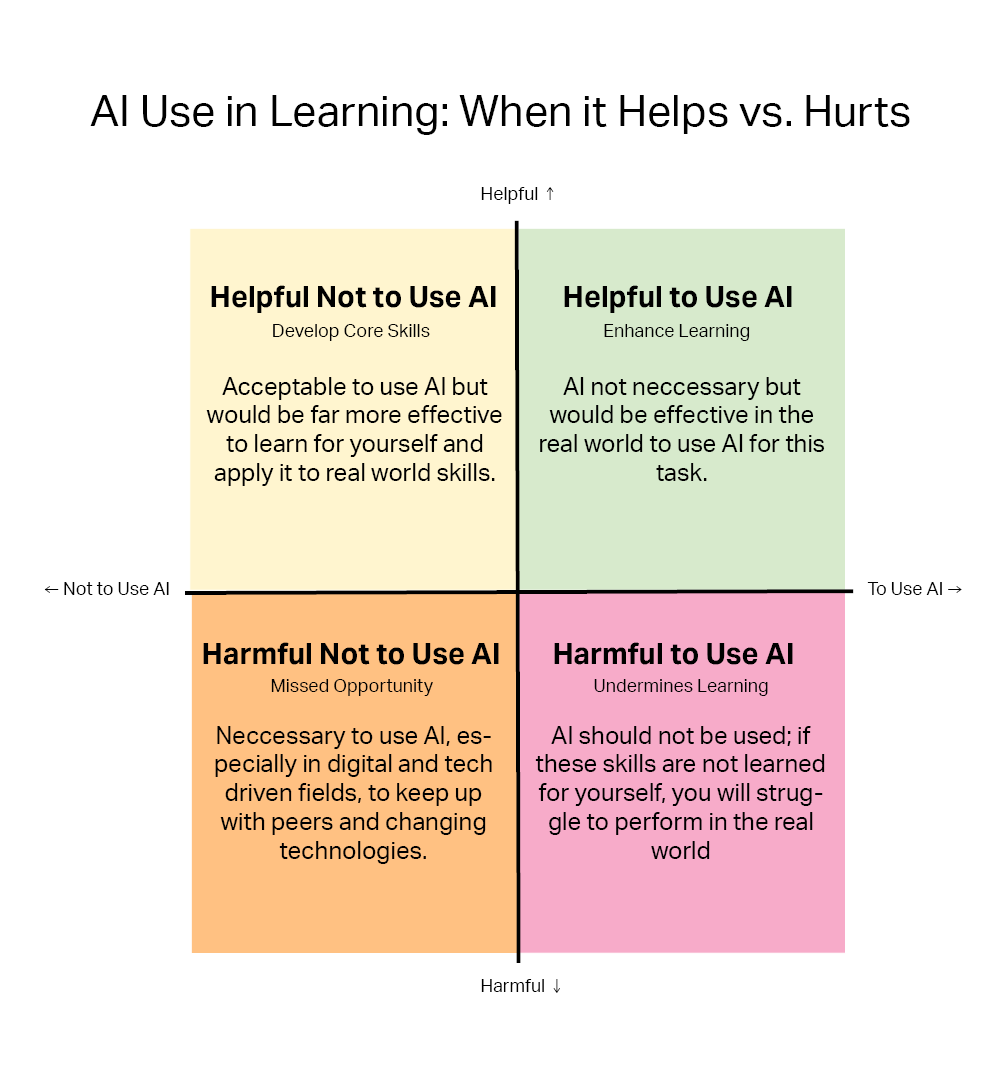

I want to highlight one part of the module in particular. When considering responsible use of gAI, students are introduced to a new visual framework that helps us all think critically about when gAI should or should not be used. This original work by the CTLD organizes decisions into four categories based on whether AI helps or hurts learning, and whether the task should or should not involve AI.

Figure 1: AI Use Quadrant Model. This model categorizes AI use into four quadrants, helping you evaluate when AI supports learning, when it should be avoided, and when overuse may cause harm. Source: OpenAI. (2025). ChatGPT (Model 5) [Large language model]. https://chat.openai.com/

This approach can be useful to all of us, not just our students.

To meet the diverse needs of faculty and courses, this training offers two assessment paths, each housed in its own module that the CTLD can help you import into your existing course.

Instructors can select the version that best fits their course design and needs. Both paths lead to the same learning outcomes: helping students develop a thoughtful, transparent, and responsible approach to AI use.

We hope this will be a useful resource for you and your students. To that end, we are interested in hearing what faculty have to say about this module and even more interested in hearing how it works in an actual class. You can review or adopt either version of the module by following the instructions found at the CTLD’s Ready page on this topic: Teaching AI Literacy: Preparing Students for Responsible Use

Featured image is based on a free-to-use photo by cottonbro studio.

Generative AI disclosure: After writing this piece I used generative AI to write a first draft of the short “teaser blurb” that went out by email. The AI Impact Quadrant image was created using ChatGPT (as indicated). Want to know more? Send me an email and we can chat!
